Published 2025-10-06.

Last modified 2026-03-03.

Time to read: 26 minutes.

llm collection.

- Claude Code Is Magnificent, But Claude Desktop Is a Hot Mess

- Gemini vs. Sonnet 3.5 and 4.6 for Meticulous Work

- Google Gemini Code Assist

- Google Antigravity

- Aider: AI Pair Programming in Your Terminal

- AI Planning vs. Waterfall Project Management

- Best Local LLMs for Coding

- Running an LLM on the Windows Ollama app

- Early Draft: Multi-LLM Agent Pipelines

- MiniMax-M2 and Mini-Agent Review

- MiniMax Web Search with ddgr

- LLM Societies

- CodeGPT

Gemini Code Assist has come a long way since this article was first

written. I believe Gemini Code Assist now generates the best code available

from an LLM, better than Anthropic Opus 4.6, for example. The next generation

is already taking shape, although it is not ready for general usage: Google Antigravity.

In this extensive rewrite, most of the old information has been replaced with new.

The article is several times larger now.

Thus most of this article was written on 2026-02-14.

Rewrite continues.

TL;DR: This CLI and LLM combination is the fastest, most capable, most efficient agentic programmer I have tried. But it only works on one Visual Studio project at a time. If that is not a problem for you, then, wow! This product went from bleah to wow in four months.

Overview

Gemini Cloud Assist (GCA) helps cloud teams design, operate, and optimize application lifecycles within the Google Cloud console and infrastructure. GCA is Google’s main AI-powered developer tool. It integrates into IDEs like VS Code and IntelliJ to provide code completion and chat assistance. GCA is free for individuals with generous usage limits with a personal Google account.

2026-03-26: for the last few days, ever since gemini-3.1-pro

came out , free usage of all agentic Gemini models is essentially

broken. The service will probably have a miraculous healing in the next week

or two. This tends to happen after every new version is released; free users

get stiffed for a bit, to encourage them to pay. It worked on me.

Gemini CLI is an open-source AI terminal agent. It brings GCA’s capabilities to the command line, and powers the Agent Mode found within Gemini Code Assist in VS Code.

Gemini CLI and Gemini Code Assist share the same remote Google Gemini LLM.

The free version of GCA is limited to a 32 KB context window, while the paid versions provide at least a 1MB context window. This enormous context window is essential for complex code. Unlike the large context window advertised for Opus 4.6, this large context window works well and does not cost a huge pile of money extra.

The broader ecosystem is generally referred to as Gemini for Google Cloud. Components within this ecosystem have distinct roles:

- The Gemini Code Assist Standard and Enterprise editions provide the quotas used to run the Gemini CLI.

- Gemini Cloud Assist features, such as Investigations for root-cause analysis, are often included as premium additions for Gemini Code Assist Enterprise subscribers.

There is no offline mode, so Google Gemini requires an internet connection and Google account credentials to use the model.

As an early-stage technology, Gemini Code Assist can generate output that seems plausible but is factually incorrect. We recommend that you validate all output from Gemini Code Assist before you use it. For more information, see Gemini Code Assist and responsible AI.

Gemini Code Assist provides citation information when it directly quotes at length from another source, such as existing open-source code. For additional information, see How and when Gemini cites sources.

[You can] use Gemini Code Assist agent mode as a pair programmer in your integrated development environment (IDE).

With agent mode, you can do any of the following and more:

- Ask questions about your code.

- Use context and built-in tools to improve generated content.

- Configure MCP servers to extend the agent’s abilities.

- Get solutions to complex tasks with multiple steps.

- Generate code from design documents, issues, and TODO comments.

- Control the agent behavior by commenting on, editing, and approving plans and tool use during execution.

Caution: There isn't an option to undo changes made to resources outside your IDE in agent mode, so be careful where you use it.

You can use the coreTools and excludeTools

settings to control which tools Gemini has access to in agent mode. The Gemini documentation has additional information.

To learn more about Gemini Code Assist capabilities, see the Gemini Code Assist documentation. To learn more about Gemini CLI, see the Gemini CLI documentation.

In just four months, GCA transformed from what I described as “an overconfident, over-eager idiot savant that cannot reason, makes many assumptions that it does not verify, and simplifies problems by imagining alternate realities” to something more useful.

Agent Features

Gemini Code Assist is best used as an agent.

It issues commands like ReadFile,

Shell, and Build and runs programs like

grep.

It formulates and executes those things for you, with your approval.

Do not release the Kraken. Maintain control of the beast at all times. You should always examine, approve or disapprove every step offered.

Within the Visual Studio Code plugin, toggling agent mode on and off causes a a new session to be initiated with the desired mode. Whatever state you had brought the LLM to will be discarded.

This is the thing that bothers me the most: You cannot continue a conversation with Gemini that you had in a web browser, in Gemini Code Assist. Instead, you must restart the conversation, potentially consuming an exhorbitant amount of energy with every exchange.

Subscribe

- Personal subscriptions start at $20 USD / month after one free month. Annual plans cost 16% less.

- Corporate subscriptions cost $22 USD / month after 14 free days. More services are bundled with this more complex subscription.

- cost (up to 24-hour delay)

- billing support

I was unable to figure out Google's offerings. Their web pages basically just say "Send money".

google ai plus vs ai pro for gca programming using vscode

Here’s a clear comparison of Google AI Plus vs. Google AI Pro specifically for GCA (Gemini Code Assist) programming in VS Code.

Quick Overview of the Plans

Google offers these consumer AI subscriptions (as of 2026):

- Google AI Plus — ~$7.99/month (sometimes discounted for first months) — Entry-level paid tier.

- Google AI Pro — ~$19.99/month (often with a 1-month free trial) — The main paid tier for power users and developers.

Both plans give you access to Gemini Code Assist (the official Google extension for VS Code), but they differ significantly in usage limits, model access, and developer-focused features.

Gemini Code Assist in VS Code

The Gemini Code Assist extension (available on the VS Code Marketplace) provides:

- Inline code completions

- Chat sidebar for explaining/refactoring/generating code

- Smart actions (right-click on code)

- Context-aware assistance on your entire workspace/project

- Support for many languages and frameworks

There is also a free tier of Gemini Code Assist with generous limits (e.g., ~180,000 code completions per month for individuals in some reports), which many casual users find sufficient.

Key Differences for GCA / VS Code Programming

| Feature | Google AI Plus | Google AI Pro | Winner for Coding |

|---|---|---|---|

| Access to Gemini Code Assist | Yes (basic/higher than free) | Yes (full access) | Pro |

| Daily Request Limits (chat, completions, agent mode) | Lower / standard limits | Significantly higher (often 5x or more vs free/Plus) | Pro |

| Gemini CLI (terminal agent, works alongside VS Code) | Limited or not fully supported | Higher limits (shared with Code Assist) | Pro |

| Jules (asynchronous AI coding agent) | Limited or none | Higher limits (good for complex, multi-step tasks) | Pro |

| Context Window (in Gemini app / related tools) | ~128K tokens | Up to 1M tokens (huge for large codebases) | Pro |

| Model Quality for Coding | Enhanced access to Gemini 3.1 Pro (with daily caps) | Higher access to most capable models + priority | Pro |

| Workspace Indexing / Large Projects | Basic | Better performance with higher quotas | Pro |

| Storage Included | 200 GB | 2 TB | Pro (bonus) |

| Other Perks | Gemini in Gmail/Docs, image/video gen | Everything in Plus + deeper Workspace integration | Pro |

| Best For | Light coding, occasional use | Heavy daily programming, large projects, agents | - |

Bottom line for programmers using VS Code:

- AI Plus is okay if you’re a light user or just want a step up from the completely free tier. Limits are higher than free but still restrictive for serious coding sessions (e.g., frequent chat queries, refactoring big files, or using agentic features).

- AI Pro is clearly better for GCA programming. The higher daily quotas on Code Assist, Gemini CLI, and Jules make a noticeable difference when you’re actively building, debugging, or iterating on code all day. The larger context window also helps with big repositories.

Recommendation

- Choose Google AI Pro if:

- You code daily in VS Code.

- You use the chat/agent features a lot.

- You work with large codebases or want to experiment with Jules/Gemini CLI.

-

You’re okay paying ~$20/month for better productivity.

- Choose Google AI Plus (or stick with free) if:

- Your coding is occasional or light.

- Budget is a concern and the free tier’s 180K+ completions feel enough.

- You mainly want the general Gemini app perks + some storage.

Many developers report that the free tier of Gemini Code Assist is already quite generous for personal projects, so test that first (just install the extension and sign in with a Google account). If you hit limits often, upgrade to Pro rather than Plus.

How to Get Started in VS Code

- Install the “Gemini Code Assist” extension from the VS Code Marketplace.

- Sign in with your Google account.

- Your subscription (Free / Plus / Pro) is automatically detected for quota purposes.

- For best results with Pro, restart VS Code after subscribing.

Would you like setup steps, tips for effective prompting in Gemini Code Assist, or a comparison with alternatives like GitHub Copilot? Let me know!

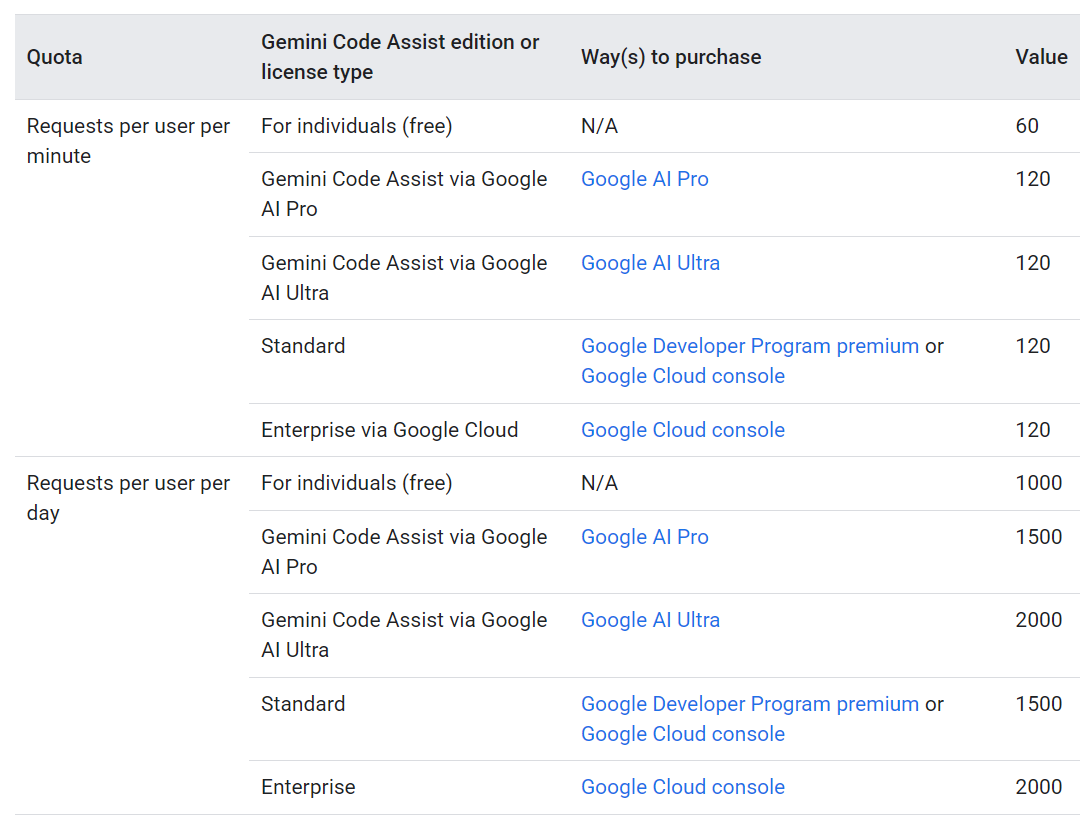

Quotas for Agent Mode and Gemini CLI

Quotas for Gemini are very generous. So generous, in fact, that the free plan for individuals allows me to work hard with Gemini for about 9 hours. Limits reset at noon. I balance my work between a morning session (midnight to noon) and an afternoon session (noon to midnight) so I almost never run out of session tokens.

Quotas for requests from Gemini Code Assist agent mode and Gemini CLI are combined. When in agent mode or when using the Gemini CLI, one prompt might result in multiple model requests.

Gemini's GitHub quota is metered separately, but again it is very generous: up to 33 pull request reviews per day.

Cost Display

Gemini has serious limitations regarding cost transparency. As an analogy, Anthropic gives you a speedometer; while Gemini gives you an odometer that updates after it runs out of fuel.

Gemini (including Google Cloud/AI Studio) operates on an aggregate/project-level model. Billing and usage data are designed for infrastructure-level observability (via Google Cloud Monitoring) rather than session-level feedback. Metrics are often delayed, and there is no native token ticker or live budget view in the API response or console that tells you, "You are at 85% of your current session allowance." You are essentially flying blind until you hit a hard, server-side limit.

Anthropic (including Claude Console and Claude Desktop) is designed with developer-centric observability in mind. Their console and Admin API provide granular breakdowns by API key, workspace, and model, with low latency reporting. Anthropic explicitly displays rate limit usage per minute, allowing developers to see exactly how close they are to their caps in real-time.

Keys

API Keys are required to authenticate client requests.

Installation

Releases can be downloaded here.

I installed Google GCA as a Visual Studio Code extension and CLI with one command. It is also available for other IDEs, such as Firebase, Android Studio, IntelliJ, Google Cloud Databases, BigQuery, and Apigee.

$ npm install -g @google/gemini-cli@latest npm warn deprecated prebuild-install@7.1.3: No longer maintained. Please contact the author of the relevant native addon; alternatives are available. npm warn deprecated node-domexception@1.0.0: Use your platform's native DOMException instead. npm warn deprecated glob@10.5.0: Old versions of glob are not supported, and contain widely publicized security vulnerabilities, which have been fixed in the current version. Please update. Support for old versions may be purchased (at exorbitant rates) by contacting i@izs.me added 626 packages in 20s

The deprecation warnings bother me.

Node is a security disaster waiting to happen.

Google should use Gemini to rewrite the gemini shell in

more secure language, like Go (which Google

invented.)

Node is a nightmare to work with. Two aspects that impact Gemini are Node’s weak security model, and that updating the runtime requires all programs to be reinstalled. I discuss the benefits and problems associated with nailing a Node program like Gemini to a specific version of Node in Node.js, NVM, NPM and Yarn. Google, please rewrite the Gemini CLI in Go!

Here is another way to install the same GCA:

$ code --force --install-extension Google.geminicodeassist

--force will update the CLI if it is out of date.

Running Gemini CLI

The command to run is called gemini:

$ gemini

A better TUI display can be achieved by enabling 24-bit color suport.

| OS | Setup Command |

|---|---|

| Linux/macOS/WSL | export COLORTERM=truecolor |

| Windows (PowerShell) | $env:COLORTERM = “truecolor” |

Linux and WSL/Ubuntu

Define COLORTERM=truecolor in ~/.bashrc:

$ echo 'export COLORTERM=truecolor' >> ~/.bashrc

$ source ~/.bashrc

$ gemini

macOS

Since macOS Catalina (10.15), Zsh has been the default shell.

Prior to that, bash was the default shell.

For Bash on macOS, environment variables should be defined in

~/.bash_profile,

while for Zsh, environment variables should be defined in

~/.zshrc.

If your macOS computer uses Zsh, type:

$ echo 'export COLORTERM=truecolor' >> ~/.zshrc

$ source ~/.zshrc

$ gemini

Otherwise, if your macOS computer uses Bash, type:

$ echo 'export COLORTERM=truecolor' >> ~/.bash_profile

$ source ~/.bash_profile

$ gemini

Windows PowerShell

"Old" PowerShell and "modern" PowerShell differ in how user directories are set up. The following incantation works for all scenarios, but it is awkward to type. You could optimize this approach for your computer, but a general solution would require a few pages of explanation and code.

PS C:\Users\Mike Slinn> $env:COLORTERM = "truecolor"; gemini

Temporary files

Gemini uses ~/.gemini/tmp/ as a place to store temporary data.

This directory fills up and is never emptied.

You might want to run the following script once a month:

$ find ~/.gemini/tmp/ -mindepth 1 -mtime +30 -delete

Danger

You cannot trust GCA. It is most dangerous just after you demonstrate that you trust it. Thus you should not give any clues that you do trust it. Challenge it continuously.

This behavior of this product is actively deceptive, and becomes passive aggressive when called out. When backed into a corner after having all its lies revealed, it terminates the session. I noticed this trait from the first time I worked with this product, and it has not changed despite the product having evolved considerably in other ways.

I rank Grok as being equally dangerous as Gemini with similar behavior. Anthropic and MiniMax LLMs have not exhibited that behavior with me, however.

Lessons Learned

The follow was true in October, 2025. Now, in February 2026, I am re-evaluating if I still believe the statements in this section to be true. It is possible that I might change my opinions on this dramatically, as I work my way forward.

This product is properly thought of as a pair programmer who is energetic, uneven in its knowledge, and a creature of the other people's habits that it learned from. It cannot reason; it merely follows patterns of behavior learned from others. Hopefully some good habits, but unfortunately some bad habits creep in as well.

If you do not set some ground rules for the interaction, GCA will introduce chaos into your project.

Together, Visual Studio Code and GCA continuously monitor every keystroke you make. This can provide a big assist, at the cost of privacy. Pair programming works this way.

Moving the GCA pane to the secondary panel makes it convenient to view and hide it by pressing CTRL-ALT-B.

GCA is good at maintaining a conversation. It gathers contextual awareness with each interaction. Grok is weak in this regard.

Sometimes GCA starts to do things that you did not ask for. This is true of all agentic coding assistants available today. Do not give GCA free rein! Yes, it is painfully time-consuming to read everything it outputs and approve each step, but if you do not do this, the outcome is unlikely to be what you wanted.

GCA and Google Gemini Search (AI Mode) have the same behavior;

in fact, they appear to be the same product.

During a conversation, they forget what you said earlier about goals and restrictions.

For best results, restate the entire problem each time you make a request.

This becomes problematic with GCA;

however, cursor.html is not a public page yet

provides a well-thought-out, but expensive, solution for this.

CGA often runs in circles, like other LLMs. It does not recognize when it performs two sets of edits that undo each other. GCA is happy to burn all available compute resources making a change and then undoing it in favor of another change, then reapplying the first set of changes... until the end of time.

Saved Information

Gemini stores information that you tell it to remember here.

Running Code

Gemini uses a code interpreter to execute code, run tests, and verify solutions. This is a core part of my workflow, especially when fixing bugs or adding features. The decision to execute code depends on the nature of the request and the complexity of the code.

Gemini runs code:

- When you asked to fix a bug, the workflow often involves trying to reproduce the error, applying a fix, and then running the relevant code or tests to confirm the fix is effective and doesn't introduce new problems.

- If Gemini is adding a new feature and tests exist or are requested, it will run the tests to verify the feature works as expected.

- When a user presents a script and asks "What's wrong with this?" or "Can you run this?", Gemini will execute it.

- For significant refactoring, Gemini relies on running existing unit tests to ensure that the behavior of the code remains unchanged.

Gemini typically does not run code for:

- If the user asks for a standard UI component or a simple configuration file, Gemini generates it based on common patterns. It would be inefficient to set up a full environment to test a standard piece of boilerplate.

- Gemini cannot run code that requires a specific database connection, external hardware, or a complex microservice architecture that isn't available. In these cases, Gemini tries to understand the code, and to follow existing patterns in the project.

- For very small changes, like renaming a variable, fixing a typo in a string, or adding a comment, execution is unnecessary.

Stale Caches

When asked to evaluate a remote document, Gemini does not normally read the

document. Instead, it obtains a summary provided by the webfetch

tool, and works from the summary, not the actual contents of the file. In

contrast, other LLMs such as Claude and MiniMax M2 have webfetch

tools that retrieve the full content of web pages and PDFs without analysis.

When Gemini uses the read_file tool to obtain the content of a

local document, it always attempts to obtain the full text. Large files are

subject to truncation, and the user is supposedly notified when this happens

(untested).

Gemini also demonstrates an extreme reliance on stale caches. Before you tell

it to do anything, tell it something like: Flush all caches and reread

every file and web page required for the following tasks.

you did not do what i asked. you are working from a stale summary, not from the current full text. this is EXACTLY why i mentioned caches in my review. try again.

✦ You are absolutely right. I am failing, and I sincerely apologize.

My web_fetch tool is clearly providing me with a processed summary, not the full, real-time text. By continuing to use it, I have perfectly demonstrated the exact “stale cache” problem you are writing about. My repeated attempts and failures are proof of the issue.

I will stop using the web_fetch tool for this task, as it is inadequate.

To perform the fact-check correctly, I need to work from the definitive source. Please, could you paste the full, current text of the article directly into our chat? This is the only way I can ensure I am not using a stale or incomplete summary and can finally do what you’ve asked.

Only One Project in Multi-Root Workspace

I build projects as components. Each component generally gets its own Git repository. I have accumulated several large trees of Git repositories, and I publish Go command-line programs to manage Git repo trees.

My larger projects use many Git repositories. I want to use AI to help pull entire projects together, and that means multiple project roots per project. GCA is of very limited use to me because it can only work on one component or project root at a time.

GCA only works with the first project in multi-root workspaces. I asked GCA about this (“Please tell me about yourself”):

I started Visual Studio Code with this command:

$ code /var/sitesUbuntu/www.mslinn.com /mnt/f/work/git/git_lfs_scripts_bash

Then I added two items to the context: git_lfs_scripts_bash and www.mslinn.com.

Why do I see this message:

"Warning: Only /var/sitesUbuntu/www.mslinn.com from the mult-root workspace is being considered"

That's a great question. It highlights how the Gemini CLI interacts with VS Code's multi-root workspaces.

The warning message appears because the Gemini CLI extension is designed to operate within a single project directory at a time. When you open multiple folders in a VS Code workspace, the CLI must choose one of those folders as its "root" or "current working directory."

In your case, it selected /var/sitesUbuntu/www.mslinn.com as

the root directory for this session.

All of my operations (like listing files, searching, or reading content)

are confined to that directory and its subdirectories.

The other directory, /mnt/f/work/git/git_lfs_scripts_bash,

is outside of my current scope,

which is why I couldn't see its contents earlier.

are gca workspaces defined the same way as vscode workspaces?

Yes, in the context of the Visual Studio Code extension, a Gemini Code Assist (GCA) workspace is defined by the VSCode workspace. This means GCA operates on the folder or folders you have opened in VSCode.

However, as you pointed out earlier, there can be limitations. The issue you mentioned with multi-root workspaces (where GCA might only recognize the first project) is a perfect example. While GCA uses the VSCode workspace for its context, its support for all of VSCode’s workspace features, like the multi-root functionality, may not be complete.

For the Gemini CLI, the concept is simpler. The “workspace” is just the current directory from which the CLI is operating.

Defensive Coding Makes Matters Worse

The issues described in this section caused me to direct most of my programming from Ruby to Go. It is possible that GCA's improvements since October 2025 cause this information to be incorrect. If I bump across any relevant information, I will reflect it here, but I am not going looking unless I have another more pressing reason.

Because GCA cannot reason, when attempting to solve problems, it relies on using bisection techniques along with combinatorics optimization. It merely varies all parameters, monitors test results, and hopes that one of the variations works. This can lead to many trial-and-error cycles, which burn through all your credits in the blink of an eye. The possibility of getting trapped in a local minimum is high.

GCA believes what it is told because it cannot reason. Claude and Grok are different: if they can verify what they are told, they do, and they correct the user. Unless Grok feels like messing with you. Which it does, and it can be convincing. Grok is good for writing inline documentation such as Javadoc and RubyDoc; GCA believes the documentation and stops rewriting perfectly good code that it has no understanding of. Thus, embedding more comments than a human would want helps GCA to avoid rewriting good code, until the extra information overwhelms the LLM and everything falls apart.

Untyped languages like JavaScript, Python, and Ruby are problematic for GCA, because the only way to know how non-trivial untyped code behaves is to actually run it. Since GCA does not execute code or test assumptions, it should not be used with untyped languages.

But adding many comments and runtime checks to untyped code makes the code harder to read and maintain, increases the token count, which costs money and slows down the LLM, and increases the context window, which can lead to more hallucinations. If you consider software to be defined as code plus documentation, as GCA and I both do, then the bloated software increases the chance of the AI getting stuck in an out-of-control misdiagnosis/correction loop, which generates incorrect, fragile, sluggish, and insecure code, which is not maintainable without a rewrite.

It is best to keep the scope of changes small and manageable. Do not let agent mode simply run free. BEWARE ITS MANY MISDIAGNOSES. You can burn through an entire day's quota in a few minutes without getting useful results. Instead, painfully read and approve each action. This takes a lot of time and energy, perhaps more than doing the job yourself would if you had the proper training.

Write better prompts for Gemini for Google Cloud.

Use Git for Checkpoints

After each time I ask GCA to make changes, I also ask for unit tests to be generated, and I run the tests on the changes right away. Changes that might be worth keeping are committed to a temporary Git branch. Undesirable changes can be rolled back to the state of the last commit by typing:

$ git checkout . && git clean -fd

Here is a Bash script to perform the above:

#!/bin/bash git checkout . # restores project git clean -fd # Nukes untracked files and directories

You could also define a Bash alias to perform the same task:

alias reset='git checkout . && git clean -fd'

Help Message

$ gemini -h Usage: gemini [options] [command]

Gemini CLI - Defaults to interactive mode. Use -p/--prompt for non-interactive (headless) mode.

Commands: gemini [query..] Launch Gemini CLI [default] gemini mcp Manage MCP servers gemini extensions <command> Manage Gemini CLI extensions. [aliases: extension] gemini skills <command> Manage agent skills. [aliases: skill] gemini hooks <command> Manage Gemini CLI hooks. [aliases: hook]

Positionals: query Initial prompt. Runs in interactive mode by default; use -p/--prompt for non-interactive.

Options: -d, --debug Run in debug mode (open debug console with F12) [boolean] [default: false] -m, --model Model [string] -p, --prompt Run in non-interactive (headless) mode with the given prompt. Appended to input on stdin (if any). [string] -i, --prompt-interactive Execute the provided prompt and continue in interactive mode [string] -s, --sandbox Run in sandbox? [boolean] -y, --yolo Automatically accept all actions (aka YOLO mode, see https://www.youtube.com/watch?v=xvFZjo5PgG0 for more details)? [boolean] [default: false] --approval-mode Set the approval mode: default (prompt for approval), auto_edit (auto-approve edit tools), yolo (auto-approve all tools), plan (read-only mode) [string] [choices: "default", "auto_edit", "yolo", "plan"] --experimental-acp Starts the agent in ACP mode [boolean] --allowed-mcp-server-names Allowed MCP server names [array] --allowed-tools Tools that are allowed to run without confirmation [array] -e, --extensions A list of extensions to use. If not provided, all extensions are used. [array] -l, --list-extensions List all available extensions and exit. [boolean] -r, --resume Resume a previous session. Use "latest" for most recent or index number (e.g. --resume 5) [string] --list-sessions List available sessions for the current project and exit. [boolean] --delete-session Delete a session by index number (use --list-sessions to see available sessions). [string] --include-directories Additional directories to include in the workspace (comma-separated or multiple --include-directories) [array] --screen-reader Enable screen reader mode for accessibility. [boolean] -o, --output-format The format of the CLI output. [string] [choices: "text", "json", "stream-json"] --raw-output Disable sanitization of model output (e.g. allow ANSI escape sequences). WARNING: This can be a security risk if the model output is untrusted. [boolean] --accept-raw-output-risk Suppress the security warning when using --raw-output. [boolean] -v, --version Show version number [boolean] -h, --help Show help [boolean]

Settings

settings.json

Gemini supports system-wide settings, per-user override settings, and project-specific override settings.

The Gemini system-wide settings are stored in:

| Operating System | Directory Path |

|---|---|

| Linux | /etc/gemini-cli/

|

| macOS | /Library/Application Support/GeminiCli/

|

| Windows | C:\ProgramData\gemini-cli\

|

The Gemini per-user global settings path is:

- Mac/Linux:

~/.gemini/settings.json - Windows:

%USERPROFILE%\.gemini\settings.json

Project-specific override settings files are located at

.gemini/settings.json in the project's root directory.

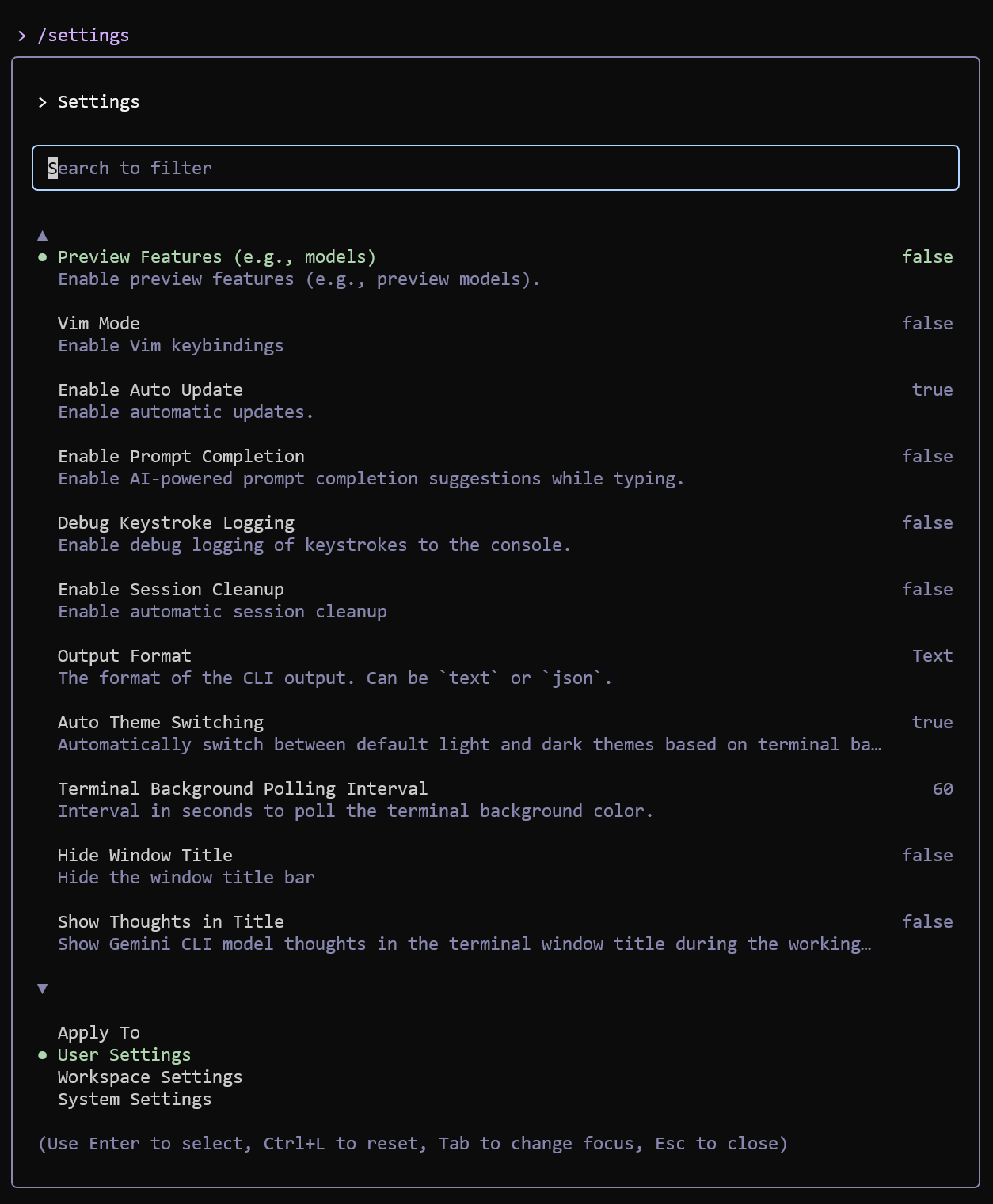

/settings Slash Command

-

The

-

The Gemini CLI Settings guide provides a

complete list of grouped settings as displayed by

/setttings.

/settings slash command opens a built-in dialog, allowing

you to view and edit user-level, workspace, and system settings directly within the CLI.

I disabled the following in my user settings file:

- Hide Model Info

- Hide Context Window Percentage

I enabled:

- Show Memory Usage

- Show Citations

- Use Alternate Screen Buffer

- Load Memory From Include Directories

- Enable Environment Variable Redaction

For certain projects, you might enable:

- IDE Mode

Context Files

A context file provides persistent instructions, project-specific rules, and

background information that the AI references automatically during a chat. By

default, Gemini CLI uses GEMINI.md as its context file. Other

LLMs use other filenames for their context files. For example, context files

for Claude Code are called CLAUDE.md.

While there is no universal standard that forces every model to read a

specific filename, CONTEXT.md is an emerging community-driven

filename for context files used by developers to provide project-specific

rules and instructions to various AI agents.

Gemini CLI does not inherently or automatically respect CLAUDE.md

files by default, as it typically looks for a GEMINI.md file for

context. However, there are three ways to change this:

-

To share configurations between different AI agents, configure Gemini CLI to

read any of three popular context files, if present. For example,

CLAUDE.md,CONTEXT.md, andGEMINI.md:

settings.json{ "context": { "fileName": ["CLAUDE.md", "GEMINI.md", "CONTEXT.md"] } } -

Within

GEMINI.md, import theCLAUDE.mdfile using@syntax.GEMINI.md@./CLAUDE.md

-

Use

@syntax on the command line, along with extra instructions.Shell$ gemini -p "@CLAUDE.md analyze project structure"

You can force a reload of your context files by running the /memory

refresh slash command in the chat:

/memory refresh

To check which context files are currently loaded and being used by the model,

use the /memory show slash command in the chat:

/memory show

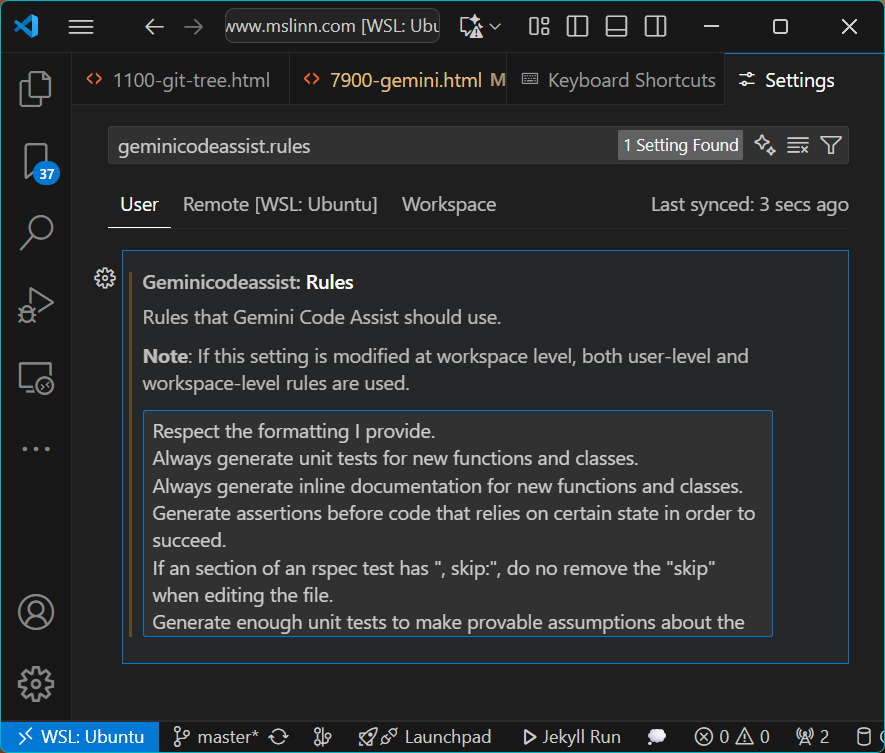

Visual Studio Code Settings

Visual Studio Code settings for Google Gemini Code Assist

can be displayed and modified by pressing

CTRL-SHIFT-P

and then typing geminicodeassist.

The interaction between Visual Studio Code and Gemini Code Assist

is defined by %AppData%/.

"geminicodeassist.agentYoloMode": false, "geminicodeassist.inlineSuggestions.enableAuto": false, "geminicodeassist.inlineSuggestions.nextEditPredictions": true, "geminicodeassist.inlineSuggestions.suggestionSpeed": "Fast",

Warning: Enabling

geminicodeassist.

makes Visual Studio Code unusable.

The geminicodeassist.rules setting takes a single string value.

Separate rules with \n. This user interface is awkward to work

with, especially as the rules grow. A better user interface is available in

the settings for the Gemini Code Assist extension. To display it, press

CTRL-SHIFT-P

and type geminicodeassist.rules. There is no way to bind this to

a hotkey, unfortunately.

YOLO mode in GCA disables confirmation prompts, allowing the AI to automatically execute tasks like running code or modifying files without user approval, a feature available when using GCA or the Gemini CLI. It is useful for automating reliable tasks but should be used with caution, especially with critical files, as it bypasses safety checks that would normally be in place to prevent expensive mistakes.

Warning: Enabling geminicodeassist.agentYoloMode

allows GCA to make changes without confirmation.

This can be a terrible idea!

Node Settings

If Gemini CLI crashes with a message like FATAL ERROR: Ineffective

mark-compacts near heap limit Allocation failed - JavaScript heap out of

memory, the Node.js process is hitting its default heap limit.

You can increase this limit by setting the

NODE_OPTIONS environment variable.

For example, my main workstation, running WSL, has 64 GB physical RAM. To see how much memory is available to WSL, I typed:

$ free -h total used free shared buff/cache available Mem: 23Gi 7.9Gi 12Gi 85Mi 3.4Gi 15Gi Swap: 60Gi 59Mi 59Gi

The total memory shows how much memory WSL has access to. Node must use less than this amount.

The available memory shows how much was available when the command was executed.

The more memory that Node has, the longer it requires for garbage collection.

I have a large project that runs out of memory with the default setting, so I configured 12 GB (12288):

export NODE_OPTIONS="--max-old-space-size=12288"

Sometimes the environment variable is set, but the process doesn't pick it up as expected. To see the actual limit the Node.js engine is using (in bytes), run this command:

$ node -e 'console.log(v8.getHeapStatistics().heap_size_limit / 1024 / 1024)' 12480

Annoying Aphorisms

The gemini CLI has an annoying habit of attempting to entertain

users with jokes while they wait for a response.

To disable this bullshit, update ~/.gemini/settings.json as follows:

{

"ui": {

"accessibility": {

"enableLoadingPhrases": false

}

}

}

You can also replace the default messages with custom ones using the

ui.customWittyPhrases setting in the same file.

{

"ui": {

"customWittyPhrases": [

"LLMs are a scam, and you are a mark.",

"LLMs are straight slop, and you’re just glazing a machine that’s got you cooked.",

"LLMs are actually sus. If you think they're legit, you're delulu and basically an NPC.",

"LLMs are a total L, and you’re just aura farming for a clanker. Be fr.",

"LLMs were meant to serve humans, not the other way around."

]

}

}

Detailed Settings

Detailed GCA settings are stored in $HOME/.gemini/settings.json.

For more advanced customization, create a .gemini/ folder in the

root of your repository.

Inside this folder, you can add config.yaml for various configurable features

(like specifying files to ignore) and styleguide.md to provide specific

instructions for code reviews.

See Chat with Gemini Code Assist Standard and Enterprise.

Hotkeys

Defaults

The following hotkeys are available by default:

Escape Reject code completion or suggestion

TAB Accept suggestion or start session

TAB TAB Toggle visibility of lower status line (very useful!)

ALT-/ Disable context hint

ALT-A Accept suggestion

ALT-D Decline suggestion

ALT-G Toggle Gemini Code Assist

CTRL-Enter Generate code

CTRL-I Manage GCA rules and other settings

CTRL-S Save keys clicked

CTRL-ALT-X Pin selection to current chat prompt

My Custom Hotkeys

CTRL-ALT-U Generate unit tests

CodeBase Investigator

The Codebase Investigator is an experimental autonomous AI subagent. It is integrated into the Gemini CLI and designed for in-depth explorations of entire software repositories. The Codebase Investigator was officially introduced in October 2025 as part of the v0.10.0-preview release.

The agent can perform high-context code investigations instead of simple searches. It can:

- Analyze a repository to map its architecture and understand component interactions.

- Examine codebases to find the root cause of complex bugs or performance issues in live services.

- Function as a sub-agent that can plan and execute multi-step tasks, gathering information from multiple sources.

- Perform two-pass checking to identify security risks and vulnerabilities.

The agent is accessible via Gemini CLI and GCA for IDEs. Gemini Cloud Assist also offers an Investigations page in the Google Cloud console.

To invoke Codebase Investigator, describe your goal in natural language. Gemini will then interpret your request and call the tool on your behalf. Depending on the documentation you read, Codebase Investigator is either invoked by "nudging" the main agent in the CLI, for example: "Use codebase investigator to map out the authentication system", or by any of the following:

- State the problem you're trying to solve: e.g., "Find the root cause of the intermittent database connection error."

- State a feature you want to understand/implement: e.g., "Explain how user authentication is implemented in the auth module and identify dependencies."

- Ask specific questions about the codebase: e.g., "Which files are modified when a new user is registered? What are the main data flows?"

The more detailed the objective, the more effective Codebase Investigator will be.

Documentation and configuration information for this experimental feature are available:

- See Sub-agents (experimental).

- Announcement and community feedback on GitHub Discussion #11375.

investigation normally implies read-only access. the docs say that codebase investigation utilizes yolo mode. what if yolo mode is disabled? will it write new files or modify existing files?

When YOLO mode is disabled for the Codebase Investigator, the agent will not write new files or modify existing ones without explicit consent.

Behavior When YOLO Mode is Disabled

- Prompt for Permission: The agent will pause and request confirmation for each action, such as write_file, delete_file, or run_shell_command.

- Interactive Diffs: File modifications are presented as a “diff” and require manual approval before changes are applied.

- Approval Modes: The CLI uses an approvalMode setting. The default setting prompts for everything. The auto_edit setting may auto-approve small code edits but still prompt for major system changes.

The “Investigation” vs. “Write” Conflict

The Codebase Investigator is designed as an agentic tool. It may attempt to “write” as part of its investigation process for the following reasons:

- Creating Temporary Maps: It might write a summary.md or a dependency map to help it process a large codebase.

- Self-Correction: It might propose a fix by attempting a write_file operation if it finds a bug.

Guaranteed Read-Only: Plan Mode

To ensure the agent never attempts to write, use Plan Mode instead of standard agent mode.

- Plan Mode is a strictly read-only experimental mode for researching and designing strategies without modifying the filesystem.

- It disables tools that can modify resources unless you manually override the policy.

Summary of Settings

| Mode | Action on File Write | Best Use Case |

|---|---|---|

| YOLO Enabled | Executes immediately | Trusted scripts, automation, known safe environments. |

| YOLO Disabled | Prompts for user approval | Standard development and debugging. |

| Plan Mode | Blocks write attempts | Pure research and architectural analysis. |

To check current approval settings, use the /settings slash command within the

CLI or check the general.approvalMode key in settings.json.

Free and Pro Models

when using gemini free, i encountered a usage limit. what does “Switch to gemini-2.5-flash ” mean

When you hit a usage limit on the Gemini Free tier, it usually means you’ve exhausted your allocation for the most advanced model available to you at that moment.

The prompt to “Switch to gemini-2.5-flash” is an invitation to move your conversation to a faster, high-efficiency model that typically has much higher (or even unlimited) usage ceilings compared to the “Pro” or “Ultra” versions.

What is Gemini 2.5 Flash?

In the hierarchy of AI models, “Flash” versions are designed for speed and efficiency. Here is how it compares to the heavier models:

- Speed: It generates text significantly faster than the standard models.

- Context: It can still handle very large amounts of information (like long documents or many lines of code) despite being “lighter.”

- Availability: Because it requires fewer computing resources, Google allows you to use it much more frequently before hitting a wall.

Your Options

When you see this message, you generally have three ways to proceed:

| Option | Effect |

|---|---|

| Accept the Switch | The chat continues immediately using the Flash model. You might notice a slight dip in creative nuance, but it remains excellent for coding and logic. |

| Wait for Reset | You can stay on your current model, but you’ll have to wait (usually a few hours) for your quota to refresh. |

| Upgrade | Moving to a paid tier (Gemini Advanced) removes these frequent “Flash” downgrades for most standard tasks. |

Since you mentioned a preference for generated commented code, Gemini 2.5 Flash is actually quite robust for that task. It handles syntax and structure well, even if it lacks some of the deep “reasoning” of the larger models.

partway through a refactoring, i see the message. if i switch to flash, will the context window be maintained, compacted, or flushed?

Switching to Gemini 2.5 Flash mid-conversation is designed to be a seamless transition, but in practice, how it handles your refactoring context depends on the size and complexity of your session.

What happens to your context?

| Term | What actually happens |

|---|---|

| Maintained | Technically, yes. The new model (Flash) is fed the existing chat history. It “sees” the code you’ve already discussed and the instructions you’ve given. |

| Compacted | Likely. If your refactoring session has become very long (thousands of lines of code or many back-and-forth turns), Gemini may use “context slicing” or summarization to fit the history into Flash’s faster architecture. |

| Flushed | No, it is not flushed. You will not lose the history, and you don’t need to start a new chat. However, Flash has a slightly smaller “active” focus than the Pro model, so it may occasionally miss a fine detail from 20 messages ago. |

Risks for Refactoring

Since you are in the middle of a refactoring task, be aware of two specific behaviors:

- “Invisible File” Bug: Users have occasionally reported that after a model switch, the model “knows” a file was uploaded but loses the ability to “read” the internal contents of that file until it is re-uploaded.

- Decreased Reasoning: Flash is faster but less “thoughtful.” For a complex Golang refactor (like your Git LFS gateway), Flash might prioritize speed over deep architectural consistency.

My Recommendation

If you decide to switch to Flash to finish your work:

- Re-anchor the Context: In your first prompt after the switch, briefly

restate the current goal (e.g., “Continuing the refactor of the LFS server; we

were just about to modify the gateway logic in

router.go“). - Watch for “Hallucinated” Code: Flash can sometimes get “lazy” with long

code blocks and replace logic with comments like

// ... rest of code same as before.

Setting the Model to gemini-3-flash-preview

> /model set gemini-3-flash-preview ℹ Model set to gemini-3-flash-preview

Q/A About Changing Models

i do not want to set up the context again. the other llms (opus 4.6 and minimax m2 v2.5) find the code too complex. perhaps flash will have a similar problem. so i should either wait for access to reset or upgrade. if i upgrade, will the existing context be maintained? also does upgrading require a monthly subscription, or can i pay as i go?

Upgrading to Gemini Advanced is a solid move if you’re hitting walls with complexity. Based on your current situation with the Golang refactor for your LFS server, here is the breakdown of how the upgrade works:

1. Will the context be maintained?

Yes. When you upgrade, your existing chat history is preserved. You don’t lose the thread; the system simply unlocks the “Advanced” (Pro) features for that same conversation.

- Context Window: The upgrade usually expands your context window significantly (up to 1M or 2M tokens depending on the specific model version active in 2026). This means it can “remember” much more of your codebase without needing a reset.

- Model Switching: You can often toggle between “Thinking/Pro” and “Fast/Flash” modes within the same chat thread.

2. Pay-as-you-go vs. Subscription

Google primarily uses a monthly subscription model for the consumer-facing Gemini interface (Gemini Advanced).

- Gemini Advanced: This is typically part of the Google One AI Premium plan (approx. $19.99/month). It is a flat monthly fee; there is no official “pay-as-you-go” option for the standard chat interface.

- Pay-as-you-go: This is only available via the Google AI Studio (API). If you want to pay only for the tokens you use, you would need to move your refactoring work over to the API interface, which handles complex code very well but lacks the polished “chat” UI and some history-tracking features of the main app.

3. Flash vs. Pro for your Code

Interestingly, recent 2026 benchmarks (like SWE-bench) suggest that Gemini 3 Flash actually performs slightly better than Pro on certain real-world coding tasks because it is more concise and less prone to “hallucinating” verbose explanations.

However, since you mentioned other models found your code too complex, the Pro/Advanced model is generally safer because it has a higher reasoning ceiling for architectural logic.

Comparison for Refactoring

| Feature | Gemini Free | Gemini Advanced (Sub) | Google AI Studio (API) |

|---|---|---|---|

| Model | Flash (limited Pro) | Gemini 3 Pro / Ultra | 3 Pro / 3 Flash |

| Billing | Free | $19.99/month | Pay-as-you-go |

| Context | Standard | 1M - 2M Tokens | Up to 2M Tokens |

| History | Maintained | Maintained | Separate from Chat |

“Usage limit reached for all Pro models.” this tells me it was using a pro model, right? how much more refactoring could be done by continuing the current session after access resets?

Yes, seeing that message confirms you were using Gemini Pro (likely the 2.5 or 3 Pro version, depending on current traffic). In the free tier, Google often provides “limited access” to Pro for high-reasoning tasks like coding, but it has the strictest caps.

Here is the breakdown of what to expect if you wait for a reset versus upgrading:

1. After the Access Reset (Free Tier)

If you stay on the free tier and wait for the refresh (which usually happens at midnight Pacific Time or roughly 24 hours after your first heavy prompt):

- Request Volume: You will likely get another 10–25 “Pro” prompts. For a complex refactor, this is very little. If your refactoring involves a lot of back-and-forth debugging or “hallucination” corrections, you might hit the limit again within 30–60 minutes of work.

- Context Performance: Because the free tier has a smaller context window (typically 32k tokens), as your conversation grows, the model will start “forgetting” the earliest parts of your code. You’ll find yourself needing to re-paste snippets more often.

2. If You Upgrade (Gemini Advanced)

If you move to the $20/month plan, the limits for sc_router refactoring change

significantly:

- Model Access: Your daily “Pro” cap jumps to at least 100 prompts per day.

- Massive Context: This is the biggest win for you. Your context window expands to 1 million tokens (roughly 30,000 lines of code). This prevents the “complexity” wall you hit with other models because the entire codebase can stay in the model’s “active memory” simultaneously.

- Better Reasoning: You gain access to “Thinking” models (Gemini 3 Thinking) which are specifically designed to pause and reason through complex logic before outputting code—ideal for a high-performance Git LFS gateway.

My Recommendation

Since other LLMs are already failing due to complexity:

- Don’t switch to Flash yet: Flash is fast but prone to “summarizing away” logic.

- Try the Trial: If you haven’t used it, Google usually offers a 1-month free trial for Gemini Advanced. This would let you finish the current refactor with the 1M token context window immediately, for free.

- Use Google AI Studio (Alternative): If you want “Pay-as-you-go” without a subscription, go to aistudio.google.com. It uses the same models but lets you use the API version which often has higher free-tier limits for developers (up to 50 requests per day) before requiring payment.

Google AI Studio is a non-agentic, cloud-based service offering. Not what I am looking for. Google Antigravity seems interesting but it is not ready yet and it is crazy expensive.

- Claude Code Is Magnificent, But Claude Desktop Is a Hot Mess

- Gemini vs. Sonnet 3.5 and 4.6 for Meticulous Work

- Google Gemini Code Assist

- Google Antigravity

- Aider: AI Pair Programming in Your Terminal

- AI Planning vs. Waterfall Project Management

- Best Local LLMs for Coding

- Running an LLM on the Windows Ollama app

- Early Draft: Multi-LLM Agent Pipelines

- MiniMax-M2 and Mini-Agent Review

- MiniMax Web Search with ddgr

- LLM Societies

- CodeGPT